Search In Files & Multi-Threading

Kate has since years a very nifty search in files plugin. It allows to do multi-file searches and replacements.

Together with the projects plugin, I use this plugin daily, it is great!

But, whereas it was very useful already since the first release and got polished a lot in the last years, it is by far not the fastest file search out there. Let's be honest: for example Visual Studio Code is fast, we are slow, that is bad :=P

For the 21.04 release, Kåre Särs (the original author & maintainer of the plugin), Waqar Ahmed and me tried to rectify this.

First step: Model-View architecture

In the 20.12 release, the plugin still fills all data into a QTreeWidget.

There is already some background thread for the actual search, but this is then still very awkwardly filled directly into the widget.

Kåre ported this to a proper model-view abstraction here. This was the foundation for the actual plan: not just one search thread.

Afterwards, Waqar did more fine-tuning here. This already yields a much improved search performance, thought we still use one background thread for the full search.

In my January 2021 summary post (too bad, still January now...) I already mentioned the nice improvements. The visual representation of the results got some nice facelift, too.

Second step: Multiple background threads

Kåre did then the next step: allow multiple background search threads using a QThreadPool with some QRunnable objects.

Interesting enough, for his experiments, only two threads yielded a real benefit, more threads were contra productive.

Given I have a machine with a lot of real cores (16) and a fast SSD, I tried to experiment more with this.

I don't believe two threads can full saturate either the cores nor the SSD.

Third step: Optimize the threading

This did lead to the third step: try to optimize the multi-threading and scale over 2 threads.

I first just tried the current implementation on my faster machine, and yes, 2 threads were really more or less the sweet spot, even with 16 or 32 threads, it got only a little faster.

This was interesting.

One idea was: In our initial approach, we just divided the files we want to search in into X chunks. I thought perhaps that was not well balance.

Therefore I just added a simple work list were the individual workers pull their work from, without any initial static split up.

Unfortunately, that didn't really improve the results.

More threads just didn't help.

Time for the next step: less guessing, more profiling ;=)

Actually, that should have been step one, but hey, a bit guessing is always nice, who doesn't think he knows the solution without any concrete idea what the actual problem is.

perf for the win

As profiling data set I used a linux.git clone and searched there for "Linus", yes, very creative ;=)

That did take ~9 seconds with 2 threads and ~8 seconds with 16 threads. As said, not what I would have expected.

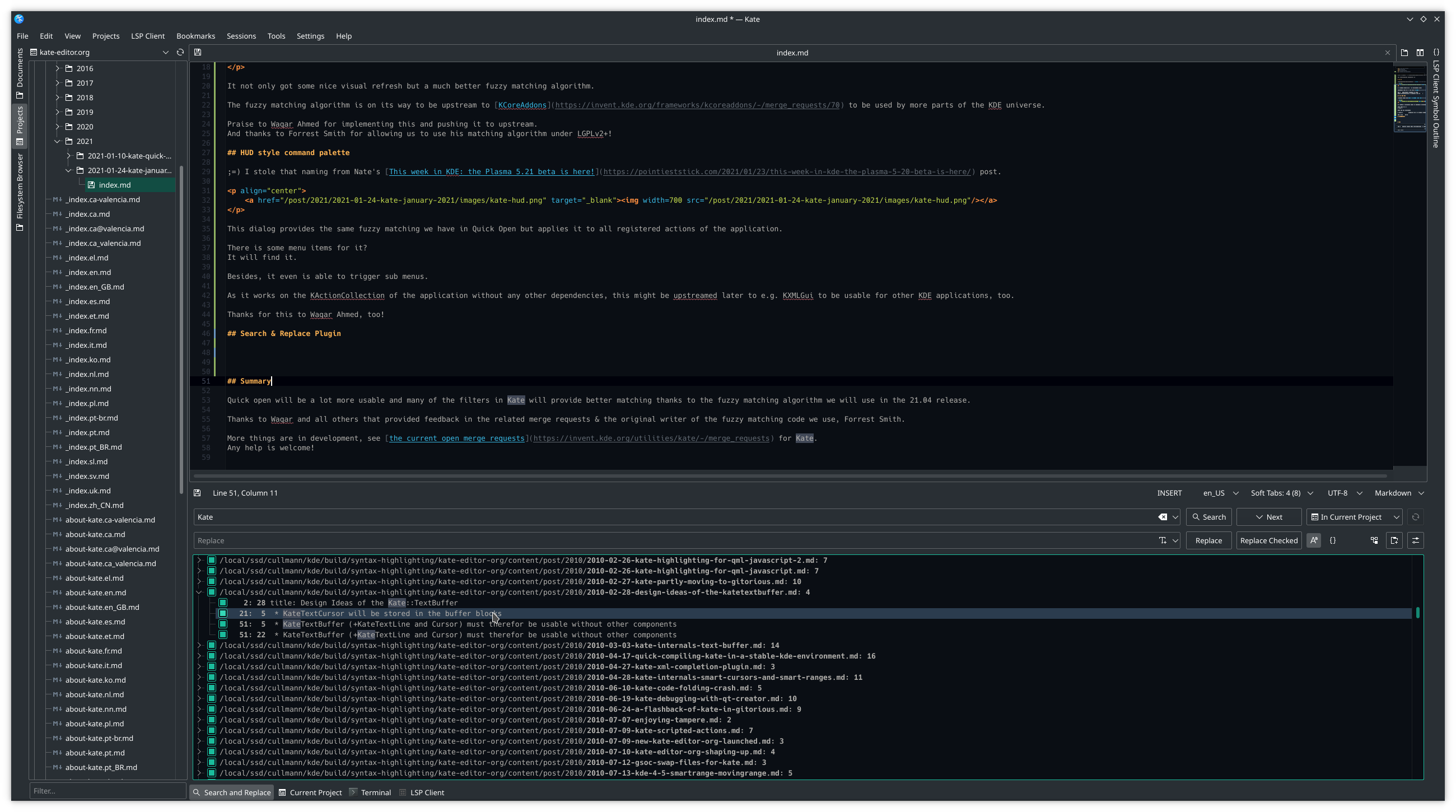

Let's take a look at the perf profile for a run with ideal thread count of 16.

What does directly look strange?

- QMimeData is visible, with something around 15% => bad, it is just just to sort out binary files, not something you would want to pay that much time for

- Why is that much time spend in locking?

After a bit reading of Qt docs & code, it was obvious:

QRegularExpression is a copy-on-write implicit shared object. If you e.g. pass the expression from the main thread to the runnables, it will not be copied. But that means, on most actions (like match), it will do internal locking, as e.g. match wants to optimize the expression that is still shared between the instances.

A simple fix: kill the sharing, see here, the old variant:

SearchDiskFiles::SearchDiskFiles(SearchDiskFilesWorkList &worklist, const QRegularExpression ®exp, const bool includeBinaryFiles)

: m_worklist(worklist)

, m_regExp(regexp)

, m_includeBinaryFiles(includeBinaryFiles)and now the new code:

SearchDiskFiles::SearchDiskFiles(SearchDiskFilesWorkList &worklist, const QRegularExpression ®exp, const bool includeBinaryFiles)

: m_worklist(worklist)

, m_regExp(regexp.pattern(), regexp.patternOptions()) // we WANT to kill the sharing, ELSE WE LOCK US DEAD!

, m_includeBinaryFiles(includeBinaryFiles)This and removing of the QMimeData lookup (replaced with some trivial heuristic if we read binary crap from the file during matching) reduces the runtime of the search to ~0.6 seconds.

In the perf profile of this improved version below, even the locking involved to emit the queued signals to the main thread is more visible than the most other parts.

Smaller searches like something in the kate.git clone are now more or less instantaneous, e.g around 0.1 seconds.

For sure, as the profile shows, there are more things one can tune, but all in all, I think the current state is awesome. We are now comparable to other modern editors again speed wise for the multi-file searching.

Wall of text? perf? I want a demo!

On the left side: the 20.12 release version.

On right side: the new stuff ;=)

Guess which variant is faster is easy to spot.

Enjoy ;=)

Help wanted!

You want more nifty stuff? More speed? More of everything?

Show up and contribute.

We have a lot of ideas but only a limited number of minions (I mean valuable contributors) to implement them :)

Comments?

A matching thread for this can be found here on r/KDE.